Dataset Integrity Assurance Note for 355632611, 632083129, 22915200, 662912746, 3522334406, 25947000

Dataset integrity is central to effective data management, including for records keyed by identifiers such as 355632611, 632083129, 22915200, 662912746, 3522334406, and 25947000. Keeping these datasets accurate and reliable requires systematic validation and regular audits. When these standards lapse, errors spread into decisions and trust in the data erodes. This note covers why integrity matters, how to protect it, what happens when it fails, and which data management practices support it.

Importance of Data Integrity

Data integrity is crucial for ensuring the accuracy and reliability of information, as even minor discrepancies can lead to significant errors in decision-making processes.

Maintaining data accuracy requires rigorous validation techniques that confirm data is both correct and consistent. With validated data, organizations can make informed choices and support transparency and accountability in their data-driven initiatives.

Methods for Ensuring Integrity

Ensuring dataset integrity requires systematic methods that monitor and validate information on an ongoing basis.

Data verification techniques, such as periodic audits and automated checks, catch errors before they propagate.
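As an illustration of an automated check, the following minimal sketch validates a list of identifiers. The two rules it applies (identifiers must be numeric, and no identifier may appear twice) are assumptions chosen for the example, and the function name `check_identifiers` is hypothetical; a real dataset would have its own rules.

```python
from collections import Counter


def check_identifiers(ids):
    """Return (identifier, problem) pairs; an empty list means every check passed.

    Assumed rules for this sketch: each identifier is a string of ASCII
    digits, and each identifier appears only once.
    """
    problems = []

    # Rule 1: every identifier must consist of ASCII digits only.
    for ident in ids:
        if not (ident.isascii() and ident.isdigit()):
            problems.append((ident, "not numeric"))

    # Rule 2: no identifier may appear more than once.
    for ident, count in Counter(ids).items():
        if count > 1:
            problems.append((ident, f"appears {count} times"))

    return problems


records = ["355632611", "632083129", "22915200",
           "662912746", "3522334406", "25947000"]
print(check_identifiers(records))
```

A check like this could run each time the dataset is updated, with a periodic audit reviewing whatever it reports.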

Additionally, checksum validation detects alterations or corruption: a checksum computed when the data is stored is compared with one computed later, and any mismatch shows that the data has changed.
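Checksum validation can be sketched in a few lines. The example below uses SHA-256 from Python's standard `hashlib` module; the choice of SHA-256 and the helper names `sha256_of` and `is_unchanged` are assumptions made for illustration, not a prescribed method.

```python
import hashlib


def sha256_of(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a hexadecimal string."""
    return hashlib.sha256(data).hexdigest()


def is_unchanged(data: bytes, expected_digest: str) -> bool:
    """Return True if the data still matches the digest recorded earlier."""
    return sha256_of(data) == expected_digest


# Record the digest when the data is stored...
original = b"355632611,632083129,22915200\n"
recorded = sha256_of(original)

# ...and compare against it when the data is read back.
print(is_unchanged(original, recorded))            # unmodified copy
print(is_unchanged(original + b"x", recorded))     # altered copy
```

The recorded digest needs to be kept separately from the data it protects; otherwise whatever corrupts the data can corrupt the digest as well.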

These methods collectively fortify the integrity and trustworthiness of datasets.

Consequences of Compromised Data

When datasets are compromised, the repercussions can extend far beyond immediate inaccuracies, impacting decision-making processes and undermining organizational trust.

Compromised data, whether through a breach or through corruption, can result in significant financial losses, legal repercussions, and operational disruptions. Stakeholder trust often erodes as a result, and reputational damage compounds the harm.

The cascading effects of compromised data emphasize the critical need for robust data integrity measures in any organization.

Best Practices for Data Management

Sound data management practices are the foundation of data integrity and reliability.

Effective data governance frameworks ensure accountability and compliance, while robust metadata management facilitates accurate data categorization and retrieval.

Conclusion

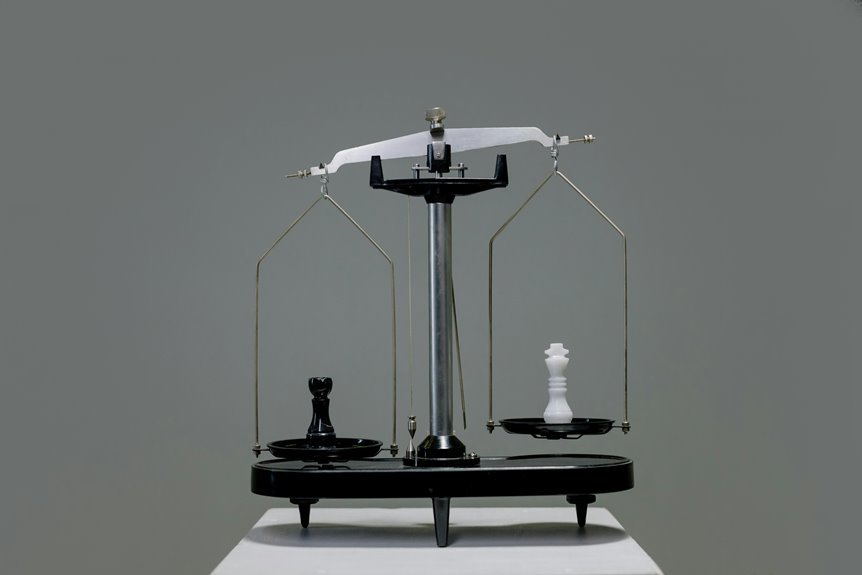

Safeguarding dataset integrity is like fortifying a dam against floods: the strength of the data governance framework determines how reliable the information behind it is. By applying robust validation techniques and conducting regular audits, organizations can protect themselves from the risks of data corruption. Keeping up these practices preserves the integrity of the datasets and supports trust and informed decision-making.